This article is part of our reviews of AI research papers, a series of posts that explore the latest findings in artificial intelligence.

IBM’s AI research division has released a 14-million-sample dataset to develop machine learning models that can help in programming tasks. Called Project CodeNet, the dataset takes its name from ImageNet, the famous repository of labeled photos that triggered a revolution in computer vision and deep learning.

While there’s little chance that machine learning models built on the CodeNet dataset will make human programmers redundant, there’s reason to be hopeful that they will make developers more productive.

Automating programming with deep learning

In the early 2010s, impressive advances in machine learning triggered excitement (and fear) about artificial intelligence soon automating many tasks, including programming. But AI’s penetration in software development has been extremely limited.

Human programmers discover new problems and explore different solutions using a plethora of conscious and subconscious thinking mechanisms. In contrast, most machine learning algorithms require well-defined problems and a lot of annotated data to develop models that can solve the same problems.

There have been many efforts to create datasets and benchmarks to develop and evaluate “AI for code” systems. But given the creative and open nature of software development, it’s very hard to create the perfect dataset for programming.

The CodeNet dataset

With Project CodeNet, the researchers at IBM have tried to create a multi-purpose dataset that can be used to train machine learning models for various tasks. CodeNet’s creators describe it as a “very large scale, diverse, and high-quality dataset to accelerate the algorithmic advances in AI for Code.”

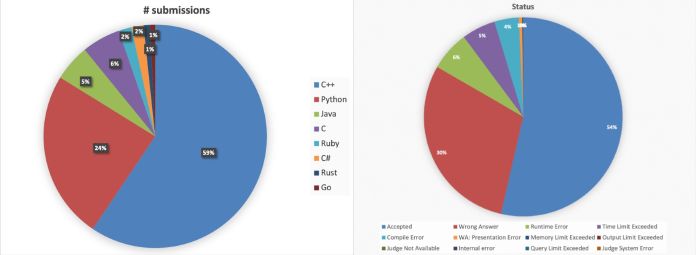

The dataset contains 14 million code samples with 500 million lines of code written in 55 different programming languages. The code samples have been obtained from submissions to nearly 4,000 challenges posted on online coding platforms AIZU and AtCoder. The code samples include both correct and incorrect answers to the challenges.

One of the key features of CodeNet is the amount of annotation that has been added to the examples. Every one of the coding challenges included in the dataset has a textual description along with CPU time and memory limits. Every code submission has a dozen pieces of information, including the language, the date of submission, size, execution time, acceptance, and error types.
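To make the per-submission annotations concrete, here is a minimal sketch of how such a record might be modeled and summarized. The field names, status strings, and sample values are assumptions made for illustration; they are not taken from CodeNet's actual schema.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Submission:
    # Hypothetical field names, loosely following the annotations
    # described above (language, size, execution time, acceptance).
    submission_id: str
    problem_id: str
    language: str
    status: str  # e.g. "Accepted", "Wrong Answer", "Time Limit Exceeded"
    cpu_time_ms: int
    memory_kb: int
    code_size_bytes: int


def status_counts_by_language(submissions):
    """Count submissions for each (language, status) pair."""
    return Counter((s.language, s.status) for s in submissions)


# Made-up sample records, for illustration only.
SAMPLE = [
    Submission("s1", "p1", "Python", "Accepted", 20, 9000, 120),
    Submission("s2", "p1", "C++", "Accepted", 3, 3500, 310),
    Submission("s3", "p1", "Python", "Wrong Answer", 18, 8900, 115),
    Submission("s4", "p2", "C++", "Time Limit Exceeded", 2000, 3600, 280),
]

counts = status_counts_by_language(SAMPLE)
for (language, status), n in sorted(counts.items()):
    print(f"{language:8s} {status:20s} {n}")
```

A table like this is one simple way to check how balanced a subset of the data is across languages and outcomes before training on it.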

The researchers at IBM have also gone through great effort to make sure the dataset is balanced along different dimensions, including programming language, acceptance, and error types.

Programming tasks for machine learning

CodeNet is not the only dataset to train machine learning models for programming tasks. But a few characteristics make it stand out. First is the sheer size of the dataset, including the number of samples and the diversity of the languages.

But perhaps more important is the metadata that goes with the coding samples. The rich annotations added to CodeNet make it suitable for a diverse set of tasks as opposed to other coding datasets that are specialized for specific programming tasks.

There are several ways CodeNet can be used to develop machine learning models for programming tasks. One is language translation. Since each coding challenge in the dataset contains submissions in various programming languages, data scientists can use it to create machine learning models that translate code from one language to another. This can be handy for organizations that want to port old code to new languages, making it accessible to newer generations of programmers and maintainable with new development tools.
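As a rough illustration of the translation idea, the sketch below groups accepted submissions by problem and pairs solutions written in two languages. The dictionary keys (`problem_id`, `language`, `status`, `code`) and the sample records are hypothetical. Note that two solutions to the same problem are only loosely parallel: they solve the same task but may use different algorithms.

```python
from collections import defaultdict
from itertools import product


def translation_pairs(submissions, source_lang, target_lang):
    """Pair accepted solutions to the same problem across two languages.

    `submissions` is an iterable of dicts with the hypothetical keys
    "problem_id", "language", "status", and "code".
    """
    # problem_id -> language -> list of accepted source files
    by_problem = defaultdict(lambda: defaultdict(list))
    for sub in submissions:
        if sub["status"] == "Accepted":
            by_problem[sub["problem_id"]][sub["language"]].append(sub["code"])

    pairs = []
    for problem_id in sorted(by_problem):
        langs = by_problem[problem_id]
        sources = langs.get(source_lang, [])
        targets = langs.get(target_lang, [])
        # Every source solution is paired with every target solution.
        for src, tgt in product(sources, targets):
            pairs.append((problem_id, src, tgt))
    return pairs


# Made-up sample records, for illustration only.
SAMPLE = [
    {"problem_id": "p1", "language": "Python", "status": "Accepted",
     "code": "print(sum(map(int, input().split())))"},
    {"problem_id": "p1", "language": "Java", "status": "Accepted",
     "code": "// Java solution to p1"},
    {"problem_id": "p1", "language": "Java", "status": "Wrong Answer",
     "code": "// buggy Java attempt"},
    {"problem_id": "p2", "language": "Python", "status": "Accepted",
     "code": "print(input()[::-1])"},
]

pairs = translation_pairs(SAMPLE, "Python", "Java")
```

Here only problem `p1` yields a pair, because the rejected Java attempt is filtered out and `p2` has no Java solution at all.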

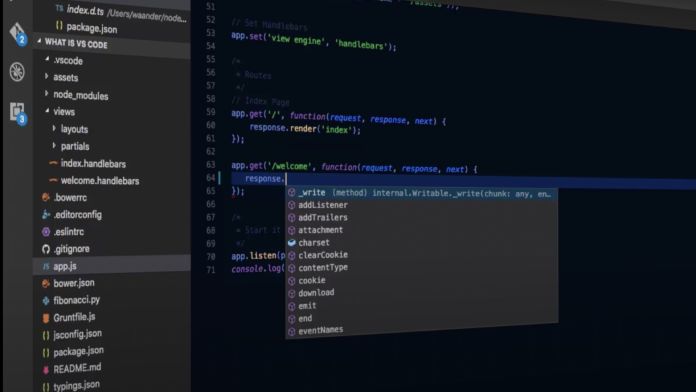

CodeNet can also help to develop machine learning models for code recommendation. Recommendation tools could range from simple autocomplete-style models that finish the current line of code to more complex systems that write full functions or blocks of code.

Since CodeNet has a wealth of metadata about memory and execution-time metrics, data scientists can also use it to develop code optimization systems. Or they can use the error-type metadata to train machine learning systems that flag potential flaws in source code.

A more advanced use case that would be interesting to see is code generation. CodeNet is a rich library of textual descriptions of problems and their corresponding source code. There have already been several examples of developers using advanced language models such as GPT-3 to generate code from natural language descriptions. It will be interesting to see whether CodeNet can help fine-tune these language models to become more consistent in code generation.

The researchers at IBM have already conducted several experiments with CodeNet, including code classification, code similarity evaluation, and code completion. The deep learning architectures they used include simple multi-layer perceptrons, convolutional neural networks, graph neural networks, and Transformers. The results, reported in a paper that details Project CodeNet, show that they have been able to obtain above 90-percent accuracy in most tasks. (Though it’s worth noting that evaluating accuracy in programming is a bit different from image classification and text generation, where minor errors might result in awkward but acceptable results.)

A monstrous engineering effort

The engineers at IBM carried out a complicated software and data engineering effort to curate the CodeNet dataset and develop its complementary tools.

First, they had to gather the code samples from AIZU and AtCoder. While one of them had an application programming interface that made it easy to obtain the code, the other had no easy-to-access interface, and the researchers had to develop tools that scraped the data from the platform’s web pages and decomposed it into a tabular format. Then, they had to manually merge the two datasets into a unified schema.

Next, they had to develop tools to cleanse the data by identifying and removing duplicates and samples that had a lot of dead code (source code that is not executed at runtime).
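One common way to catch near-duplicates is to compare the token sets of two programs with Jaccard similarity. The sketch below illustrates that general technique; it is not a description of IBM's actual cleansing tools, and the crude regex tokenizer and the 0.9 threshold are assumptions chosen for the example.

```python
import re

# Crude language-agnostic tokenizer: identifiers, integers, or any
# single non-whitespace character. An assumption for illustration only.
TOKEN_RE = re.compile(r"[A-Za-z_]\w*|\d+|\S")


def tokenize(code):
    """Split source text into a flat list of tokens, ignoring whitespace."""
    return TOKEN_RE.findall(code)


def jaccard(tokens_a, tokens_b):
    """Jaccard similarity of two token collections, treated as sets."""
    set_a, set_b = set(tokens_a), set(tokens_b)
    if not set_a and not set_b:
        return 1.0
    return len(set_a & set_b) / len(set_a | set_b)


def drop_near_duplicates(samples, threshold=0.9):
    """Keep a sample only if it is below `threshold` similarity to
    every sample already kept (greedy, quadratic in the worst case)."""
    kept, kept_tokens = [], []
    for code in samples:
        tokens = tokenize(code)
        if all(jaccard(tokens, prev) < threshold for prev in kept_tokens):
            kept.append(code)
            kept_tokens.append(tokens)
    return kept


samples = [
    "a = 1\nprint(a)",
    "a  =  1\nprint( a )",          # same tokens, different spacing
    "for i in range(3): print(i)",
]
unique = drop_near_duplicates(samples)
```

The pairwise comparison is fine for a toy example, but a corpus of millions of samples would need something more scalable, such as hashing or clustering, to avoid comparing every pair.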

They also developed preprocessing tools that make it easier to train machine learning models on the CodeNet corpus. These tools include tokenizers for different programming languages, parse-tree generators, and a graph representation generator for use in graph neural networks.
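To give a feel for what a graph representation of code looks like, here is a minimal sketch that turns a Python snippet into a list of parent-child edges using the standard library's `ast` module. It covers Python only and is an illustration of the idea, not CodeNet's own tooling, which targets many languages.

```python
import ast


def ast_edges(source):
    """Return (parent_type, child_type) edges of the Python AST of `source`."""
    tree = ast.parse(source)
    edges = []
    # ast.walk visits every node; iter_child_nodes yields direct children.
    for parent in ast.walk(tree):
        for child in ast.iter_child_nodes(parent):
            edges.append((type(parent).__name__, type(child).__name__))
    return edges


edges = ast_edges("x = 1 + 2")
for parent, child in edges:
    print(f"{parent} -> {child}")
```

An edge list like this, with node types as features, is the kind of input a graph neural network library would typically expect after converting the type names to integer IDs.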

All these efforts are a reminder of the huge human effort needed to create efficient machine learning systems. Artificial intelligence is not ready to replace programmers (at least for the time being). But it might change the kind of tasks that require the efforts and ingenuity of human programmers.
