Many tasks require discarding some of the information held in an image's pixels, for example by reducing the number of colours or the bit depth. This reduction introduces quantization error. Dithering is a technique that keeps the reduced image looking close to the original by spreading that error out as fine, noise-like detail instead of visible bands. In this article, we take a deep dive into dithering in image processing and how it helps preserve the perceived quality of images. We will go through various dithering approaches and understand them with examples. The major points discussed in this article are listed below.

Table of Contents


- What is Quantization?

- What is a Quantization Error?

- What is Dithering?

- Various Dithering Processes

- Random Dithering

- Average Dithering

- Ordered Dithering

- Floyd-Steinberg Dithering

- Application of Dithering

Let’s start the discussion by understanding what quantization is.

What is Quantization?

Quantization is the process of converting a continuous range of values into a finite set of discrete values. A simple example is analogue-to-digital conversion, where a continuous analogue signal is represented by a range of discrete digital values. Many kinds of data require quantization, most commonly audio and images. In this article, we focus on image data, so let’s look at how quantization works in image processing.

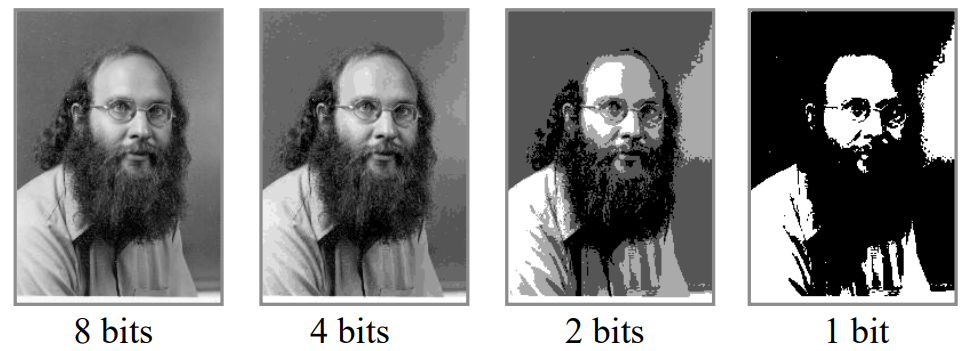

In image processing, quantization can be seen as deciding which parts of an image's information can be discarded or merged with minimal perceived loss. Because it reduces the amount of information, quantization inevitably lowers image quality to some degree. For example, colour quantization keeps only a limited set of colours that best represent the image and replaces every other colour with the closest colour from that set. Converting an image to GIF is a typical case: GIF uses a palette of at most 256 colours, so the image's colours must be reduced to fit it. The image above shows the quantization process applied to an image.
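A minimal sketch of uniform intensity quantization in Python, assuming a grayscale image stored as a list of rows of 8-bit values (0-255). The function names `quantize` and `quantize_image` are illustrative, not from any library. An intensity resolution of `bits` bits allows `2 ** bits` output levels, and each pixel is rounded to the nearest of those evenly spaced levels.

```python
def quantize(value, levels):
    """Map an 8-bit intensity (0-255) to the nearest of `levels` evenly spaced values."""
    step = 255 / (levels - 1)              # spacing between the allowed output levels
    return round(round(value / step) * step)

def quantize_image(image, bits):
    """Reduce a grayscale image (list of rows) to 2**bits intensity levels."""
    levels = 2 ** bits
    return [[quantize(p, levels) for p in row] for row in image]

image = [[0, 60, 120, 180, 240]]
print(quantize_image(image, 2))  # → [[0, 85, 85, 170, 255]]
```

With 2 bits, only four intensities (0, 85, 170, 255) survive, so nearby input values such as 60 and 120 become indistinguishable. This is the information loss the next section calls quantization error.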

As the image shows, quantization reduces both the size of the image and how faithfully it represents the original. The information lost in this way is known as the quantization error, which we introduce next.

What is a Quantization Error?

In the previous section, we saw how quantization reduces the size of an image. Image data can often be treated as a signal, that is, as a sequence of sampled values. Quantization is needed when the original signal or image carries more precision than the number of bits available to store each sample or pixel.

Let’s treat the image data as a signal. When a signal is converted from analogue to digital form, some information is lost. The accuracy of the digital signal depends on the resolution of the quantization, that is, on how many discrete levels are available. The difference between the actual analogue value and its approximate digital value is called the quantization error.
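To make this definition concrete, here is a small Python sketch. It samples a sine wave (an illustrative stand-in for the analogue signal), quantizes each sample to `bits` bits with a rounding quantizer over the range [-1, 1], and measures the error e = x - Q(x). For a rounding quantizer with step size Δ, the error of an in-range sample never exceeds Δ/2, so each extra bit roughly halves the worst-case error. The name `quantize_uniform` is hypothetical.

```python
import math

def quantize_uniform(x, bits, lo=-1.0, hi=1.0):
    """Round x to the nearest of 2**bits evenly spaced levels spanning [lo, hi]."""
    levels = 2 ** bits
    step = (hi - lo) / (levels - 1)
    index = round((x - lo) / step)
    index = min(max(index, 0), levels - 1)  # clamp samples that fall outside [lo, hi]
    return lo + index * step

# Illustrative "analogue" signal: one period of a sine wave sampled 50 times.
samples = [math.sin(2 * math.pi * n / 50) for n in range(50)]

for bits in (2, 4, 8):
    step = 2.0 / (2 ** bits - 1)
    max_err = max(abs(x - quantize_uniform(x, bits)) for x in samples)
    print(f"{bits}-bit: max |error| = {max_err:.4f}, bound step/2 = {step / 2:.4f}")
```

The printed maximum error shrinks as the bit depth grows and always stays within the Δ/2 bound.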

When we talk about image resolution, we find there are three types of resolution:

- Intensity Resolution: the number of distinct intensity or colour levels a pixel can take, determined by its bit depth (b bits allow 2^b levels).

- Spatial Resolution: the number of pixels (width × height) used to represent the image.

- Temporal Resolution: how finely images are sampled in time, for example the frame rate of a video. It can be measured by the refresh rate of a display device.

Corresponding to these three resolutions, images can suffer from the following types of error:

- Intensity Quantization

- Spatial Aliasing

- Temporal Aliasing

Spatial and temporal aliasing occur because the spatial or temporal resolution is too low. Intensity quantization, on the other hand, occurs when the intensity resolution (bit depth) of the image is reduced, and it is what we usually mean by quantization error.

Quantization errors are unavoidable, but they should be kept as small as possible, because larger errors mean more lost information. These errors arise from limited intensity resolution. Dithering does not remove the quantization error; instead, it randomizes it so that it appears as fine noise rather than as visible artifacts. Let’s see what this means and how it works in image processing.

What is Dithering?

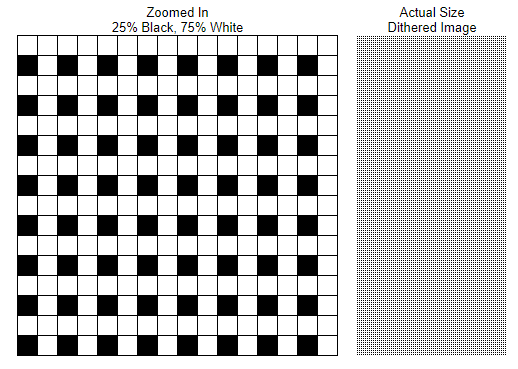

In the previous sections, we introduced quantization and quantization errors. We can reduce the visible effect of quantization error by deliberately adding noise to the image before quantizing it. This intentionally applied noise is called dither, and the process is called dithering. Dithering allows an image stored with a limited palette, such as a bitmap, to appear to contain more colours than the palette actually holds. The word dither refers to a random or semi-random perturbation of the pixel values.

In image processing and computer graphics, dithering creates the illusion of colour depth on a system with a limited colour palette. Colours that are not available in the palette are approximated by mixing pixels of the colours that are available. The image above shows how shades of grey can be suggested using only black and white pixels.
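The effect of intentionally added noise can be checked numerically. The sketch below is written in Python and assumes intensities in [0, 1] for readability. It quantizes a flat 30% grey region to black and white twice: once directly, and once after adding uniform noise spanning one quantization step. The helper names are hypothetical.

```python
import random

def quantize_1bit(x):
    """Quantize an intensity in [0, 1] to black (0) or white (1)."""
    return 1 if x >= 0.5 else 0

def dithered_1bit(x, rng):
    """Add uniform noise spanning one quantization step, then quantize."""
    return quantize_1bit(x + rng.uniform(-0.5, 0.5))

rng = random.Random(0)   # fixed seed for a reproducible run
grey = 0.3               # a flat 30% grey region of 10,000 pixels
plain = [quantize_1bit(grey) for _ in range(10_000)]
dithered = [dithered_1bit(grey, rng) for _ in range(10_000)]

print("plain mean:   ", sum(plain) / len(plain))         # 0.0: every pixel turns black
print("dithered mean:", sum(dithered) / len(dithered))   # close to 0.3
```

Without noise, the grey disappears entirely. With noise, a pixel of value x ends up white with probability x, so the share of white pixels matches the grey level on average. This is the illusion of grey built from black and white pixels.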

We use dithering to reduce the effect of quantization by distributing the errors among the pixels of the image.

Various Dithering Processes

Common dithering methods include:

- Random Dithering

- Average Dithering

- Ordered Dithering

- Floyd-Steinberg Dithering

Random Dithering: Random dithering converts a grayscale image into a black-and-white (monochrome) image. It is called random because each pixel is compared against its own randomly chosen threshold. If the pixel value is greater than the random value, the pixel becomes white; otherwise it becomes black.

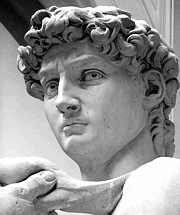

The noise introduced by random dithering is high-frequency, so the result looks like a badly tuned TV picture. This type of dithering tends to give acceptable results mainly on images that carry little detail. The image above shows random dithering applied to a grayscale image.
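A minimal Python sketch of random dithering, assuming a grayscale image stored as a list of rows of 0-255 values. The function name `random_dither` is illustrative. Drawing each threshold from 0-254 is a choice made here so that pure black always stays black and pure white always stays white, and a pixel of value p turns white with probability p/255.

```python
import random

def random_dither(image, seed=None):
    """Convert a grayscale image (rows of 0-255 values) to black (0) / white (255).

    Each pixel is compared with its own random threshold drawn uniformly from
    0-254, so brighter pixels are proportionally more likely to become white.
    """
    rng = random.Random(seed)
    return [[255 if p > rng.randrange(255) else 0 for p in row] for row in image]

# A horizontal gradient from black to white, 16 columns by 8 rows.
gradient = [[x * 17 for x in range(16)] for _ in range(8)]
for row in random_dither(gradient, seed=42):
    print("".join("#" if p else "." for p in row))   # '#' = white, '.' = black
```

The density of white pixels rises from left to right, but the placement is noisy, which is the "badly tuned TV" look described above.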

Average Dithering: This is similar to random dithering in that it converts a grayscale image into a black-and-white image. What makes it different is the threshold: instead of a random value per pixel, it uses a single threshold equal to the average of all pixel values in the image. Every pixel is compared with this threshold. Pixels below the threshold become black, and pixels above it become white.

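The above image is a representation of an image after average dithering. Because every pixel is compared against the same global threshold, average dithering tends to introduce visible contour artifacts: smooth gradients collapse into flat black and white regions.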
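A minimal Python sketch of average dithering on a grayscale image stored as rows of 0-255 values. The function name is illustrative. The text does not say what happens to pixels exactly equal to the average, so this sketch assumes they become black.

```python
def average_dither(image):
    """Threshold a grayscale image (rows of 0-255 values) at its mean intensity."""
    pixels = [p for row in image for p in row]
    threshold = sum(pixels) / len(pixels)   # one global threshold for the whole image
    # Pixels above the mean become white; pixels at or below it become black.
    return [[255 if p > threshold else 0 for p in row] for row in image]

print(average_dither([[10, 50], [200, 240]]))  # → [[0, 0], [255, 255]]
```

Since the threshold never changes across the image, every region of a smooth gradient lands entirely on one side of it. That is where the contour artifacts come from.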

Ordered Dithering: This method is also used to convert grayscale or colour images into monochrome. Instead of a random or global threshold, it uses a fixed, repeating pattern of thresholds (a threshold map, such as a Bayer matrix) tiled across the image. As a result, each area is rendered with a dot pattern whose density depends on the colour or intensity in that area. The below image is the representation of this type of dithering.

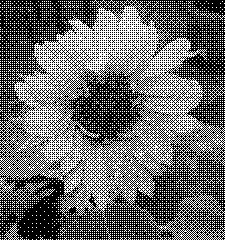
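A minimal Python sketch of ordered dithering with the standard 4×4 Bayer threshold map, assuming a grayscale image stored as rows of 0-255 values. The function name is illustrative, and the `(k + 0.5) / 16` scaling of the matrix entries to thresholds is one common convention among several.

```python
BAYER_4X4 = [
    [0, 8, 2, 10],
    [12, 4, 14, 6],
    [3, 11, 1, 9],
    [15, 7, 13, 5],
]

def ordered_dither(image):
    """Ordered (Bayer) dithering of a grayscale image (rows of 0-255 values) to 0/255."""
    n = len(BAYER_4X4)
    out = []
    for y, row in enumerate(image):
        out_row = []
        for x, p in enumerate(row):
            # Position-dependent threshold from the tiled matrix, scaled into 0-255.
            threshold = (BAYER_4X4[y % n][x % n] + 0.5) / (n * n) * 255
            out_row.append(255 if p > threshold else 0)
        out.append(out_row)
    return out

gradient = [[x * 17 for x in range(16)] for _ in range(8)]   # 0, 17, ..., 255
for row in ordered_dither(gradient):
    print("".join("#" if p else "." for p in row))           # '#' = white, '.' = black
```

Unlike random dithering, the output is deterministic and repeats every four pixels. Each intensity maps to a regular dot pattern, for example exactly half the pixels of a flat mid-grey (128) area turn white.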

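Floyd-Steinberg Dithering: Unlike ordered dithering, this method does not rely on a fixed pattern, so its output shows relatively few repeated patterns. It is an error-diffusion method: each pixel is quantized to the nearest available value, and the resulting quantization error is distributed to the neighbouring pixels that have not been processed yet (7/16 to the pixel on the right, and 3/16, 5/16 and 1/16 to the pixels below-left, below and below-right). It can be used to reduce grayscale images to black and white as well as to reduce colour images to a limited palette. The below image is a representation of results from Floyd-Steinberg dithering. Because it preserves more detail, it is often used in place of ordered dithering to represent the image with richer information.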
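A minimal Python sketch of Floyd-Steinberg error diffusion, assuming a grayscale image stored as rows of 0-255 values and a plain left-to-right scan of every row. Some implementations alternate scan direction per row instead. The function name is illustrative.

```python
def floyd_steinberg(image):
    """Floyd-Steinberg error diffusion of a grayscale image (rows of 0-255) to 0/255."""
    h, w = len(image), len(image[0])
    buf = [[float(p) for p in row] for row in image]   # working copy that accumulates error
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            old = buf[y][x]
            new = 255 if old >= 127.5 else 0            # nearest of black and white
            out[y][x] = new
            err = old - new
            # Push the error onto neighbours that have not been processed yet.
            if x + 1 < w:
                buf[y][x + 1] += err * 7 / 16
            if y + 1 < h:
                if x > 0:
                    buf[y + 1][x - 1] += err * 3 / 16
                buf[y + 1][x] += err * 5 / 16
                if x + 1 < w:
                    buf[y + 1][x + 1] += err * 1 / 16
    return out

grey = [[64] * 32 for _ in range(32)]    # flat 25% grey patch
result = floyd_steinberg(grey)
white = sum(p == 255 for row in result for p in row)
print(f"white pixels: {white} of {32 * 32}")   # roughly a quarter
```

Because the error is carried forward rather than discarded, the average brightness of the output tracks the input closely, up to small losses at the image borders.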

Application of Dithering

Having introduced dithering and its main types, let’s look at where it is used in practice. Some common applications of dithering are as follows:

- Displaying graphics accurately is one of the most common applications of dithering. For example, with dithering we can display an image containing millions of colours on hardware that can show only 256 colours at a time.

- Dithering helps save memory and disk space: an image can be stored with fewer colours or fewer bits per pixel, while dithering avoids the banding that this reduction would otherwise cause. Smaller images and signals are also faster to transfer.

- An animated GIF is essentially a short sequence of image frames, and each frame is limited to a palette of at most 256 colours. Dithering is very useful when creating GIFs, because it lets each frame be reduced to such a small palette, keeping file sizes manageable, while limiting the visible loss in image quality.

- Dithering can be used to avoid colour banding. Suppose a colour palette can show only two shades of red, but an image with 16 shades of red needs to be displayed. Dithering can approximate the missing intermediate shades by mixing pixels of the two available shades and varying how closely they are spaced, which produces a smooth-looking gradient.

Final Words

In this article, we introduced quantization and discussed quantization errors. We then saw how dithering reduces the visible effect of quantization errors and looked at different approaches for performing it. Finally, we went through some applications of dithering.
