Sixty-five years ago, 10 computer scientists convened at Dartmouth College in Hanover, NH, for a workshop on artificial intelligence, defined a year earlier in the proposal for the workshop as “making a machine behave in ways that would be called intelligent if a human were so behaving.”

It was the event that “initiated AI as a research discipline,” which grew to encompass multiple approaches, from the symbolic AI of the 1950s and 1960s to the statistical analysis and machine learning of the 1970s and 1980s to today’s deep learning, the statistical analysis of “big data.” But the preoccupation with developing practical methods for making machines behave as if they were humans had already emerged seven centuries ago.

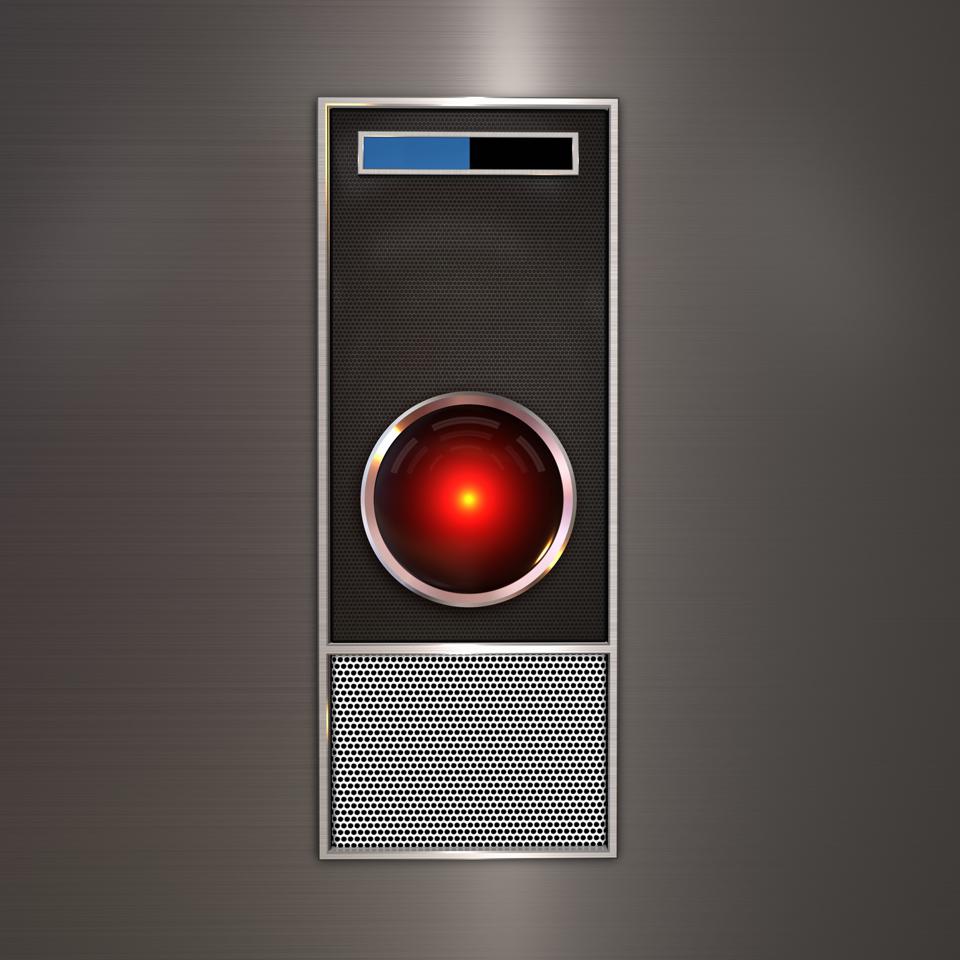

HAL (Heuristically programmed ALgorithmic computer) 9000, a sentient artificial general intelligence … (Getty)

1308 Catalan poet and theologian Ramon Llull publishes Ars generalis ultima (The Ultimate General Art), further perfecting his method of using paper-based mechanical means to create new knowledge from combinations of concepts.

1666 Mathematician and philosopher Gottfried Leibniz publishes Dissertatio de arte combinatoria (On the Combinatorial Art), following Ramon Llull in proposing an alphabet of human thought and arguing that all ideas are nothing but combinations of a relatively small number of simple concepts.

1726 Jonathan Swift publishes Gulliver’s Travels, which includes a description of the Engine, a machine on the island of Laputa (and a parody of Llull’s ideas): “a Project for improving speculative Knowledge by practical and mechanical Operations.” By using this “Contrivance,” “the most ignorant Person at a reasonable Charge, and with a little bodily Labour, may write Books in Philosophy, Poetry, Politicks, Law, Mathematicks, and Theology, with the least Assistance from Genius or study.”

1755 Samuel Johnson defines intelligence in A Dictionary of the English Language as “Commerce of information; notice; mutual communication; account of things distant or discreet.”

1763 Thomas Bayes develops a framework for reasoning about the probability of events. Bayesian inference will become a leading approach in machine learning.

1854 George Boole argues that logical reasoning could be performed systematically in the same manner as solving a system of equations.

1865 Richard Millar Devens describes in the Cyclopædia of Commercial and Business Anecdotes how the banker Sir Henry Furnese profited by receiving and acting upon information prior to his competitors: “Throughout Holland, Flanders, France, and Germany, he maintained a complete and perfect train of business intelligence.”

1898 At an electrical exhibition in the recently completed Madison Square Garden, Nikola Tesla demonstrates the world’s first radio-controlled vessel. The boat was equipped with, as Tesla described it, “a borrowed mind.”

1910 Belgian lawyers Paul Otlet and Henri La Fontaine establish the Mundaneum where they wanted to gather together all the world’s knowledge and classify it according to their Universal Decimal Classification.

1914 The Spanish engineer Leonardo Torres y Quevedo demonstrates the first chess-playing machine, capable of king and rook against king endgames without any human intervention.

1921 Czech writer Karel Čapek introduces the word “robot” in his play R.U.R. (Rossum’s Universal Robots). The word “robot” comes from the word “robota” (work).

1925 Houdina Radio Control releases a radio-controlled driverless car, travelling the streets of New York City.

1927 The science-fiction film Metropolis is released. It features a robot double of a peasant girl, Maria, which unleashes chaos in Berlin of 2026—it was the first robot depicted on film, inspiring the Art Deco look of C-3PO in Star Wars.

1929 Makoto Nishimura designs Gakutensoku, Japanese for “learning from the laws of nature,” the first robot built in Japan. It could change its facial expression and move its head and hands via an air pressure mechanism.

1937 British science fiction writer H.G. Wells predicts that “the whole human memory can be, and probably in short time will be, made accessible to every individual” and that “any student, in any part of the world, will be able to sit with his [microfilm] projector in his own study at his or her convenience to examine any book, any document, in an exact replica.”

1943 Warren S. McCulloch and Walter Pitts publish “A Logical Calculus of the Ideas Immanent in Nervous Activity” in the Bulletin of Mathematical Biophysics. This influential paper, in which they discussed networks of idealized and simplified artificial “neurons” and how they might perform simple logical functions, will become the inspiration for computer-based “neural networks” (and later “deep learning”) and their popular description as “mimicking the brain.”

1947 Statistician John W. Tukey coins the term “bit” to designate a binary digit, a unit of information stored in a computer.

1949 Edmund Berkeley publishes Giant Brains: Or Machines That Think in which he writes: “Recently there have been a good deal of news about strange giant machines that can handle information with vast speed and skill….These machines are similar to what a brain would be if it were made of hardware and wire instead of flesh and nerves… A machine can handle information; it can calculate, conclude, and choose; it can perform reasonable operations with information. A machine, therefore, can think.”

1949 Donald Hebb publishes Organization of Behavior: A Neuropsychological Theory in which he proposes a theory about learning based on conjectures regarding neural networks and the ability of synapses to strengthen or weaken over time.

1950 Claude Shannon’s “Programming a Computer for Playing Chess” is the first published article on developing a chess-playing computer program.

1950 Alan Turing publishes “Computing Machinery and Intelligence” in which he proposes “the imitation game” which will later become known as the “Turing Test.”

1951 Marvin Minsky and Dean Edmunds build SNARC (Stochastic Neural Analog Reinforcement Calculator), the first artificial neural network, using 3000 vacuum tubes to simulate a network of 40 neurons.

1952 Arthur Samuel develops the first computer checkers-playing program and the first computer program to learn on its own.

August 31, 1955 The term “artificial intelligence” is coined in a proposal for a “2 month, 10 man study of artificial intelligence” submitted by John McCarthy (Dartmouth College), Marvin Minsky (Harvard University), Nathaniel Rochester (IBM), and Claude Shannon (Bell Telephone Laboratories). The workshop, which took place a year later, in July and August 1956, is generally considered the official birthdate of the new field.

December 1955 Herbert Simon and Allen Newell develop the Logic Theorist, the first artificial intelligence program, which eventually would prove 38 of the first 52 theorems in Whitehead and Russell’s Principia Mathematica.

1957 Frank Rosenblatt develops the Perceptron, an early artificial neural network enabling pattern recognition based on a two-layer computer learning network. The New York Times reported the Perceptron to be “the embryo of an electronic computer that [the Navy] expects will be able to walk, talk, see, write, reproduce itself and be conscious of its existence.” The New Yorker called it a “remarkable machine… capable of what amounts to thought.”

1957 In the movie Desk Set, when a “methods engineer” (Spencer Tracy) installs the fictional computer EMERAC, the head librarian (Katharine Hepburn) tells her anxious colleagues in the research department: “They can’t build a machine to do our job; there are too many cross-references in this place.”

1958 Hans Peter Luhn publishes “A Business Intelligence System” in the IBM Journal of Research and Development. It describes an “automatic method to provide current awareness services to scientists and engineers.”

1958 John McCarthy develops the programming language Lisp, which becomes the most popular programming language used in artificial intelligence research.

1959 Arthur Samuel coins the term “machine learning,” reporting on programming a computer “so that it will learn to play a better game of checkers than can be played by the person who wrote the program.”

1959 Oliver Selfridge publishes “Pandemonium: A paradigm for learning” in the Proceedings of the Symposium on Mechanization of Thought Processes, in which he describes a model for a process by which computers could recognize patterns that have not been specified in advance.

1959 John McCarthy publishes “Programs with Common Sense” in the Proceedings of the Symposium on Mechanization of Thought Processes, in which he describes the Advice Taker, a program for solving problems by manipulating sentences in formal languages with the ultimate objective of making programs “that learn from their experience as effectively as humans do.”

1961 The first industrial robot, Unimate, starts working on an assembly line in a General Motors plant in New Jersey.

1961 James Slagle develops SAINT (Symbolic Automatic INTegrator), a heuristic program that solved symbolic integration problems in freshman calculus.

1962 Statistician John W. Tukey writes in “The Future of Data Analysis”: “Data analysis, and the parts of statistics which adhere to it, must…take on the characteristics of science rather than those of mathematics… data analysis is intrinsically an empirical science.”

1964 Daniel Bobrow completes his MIT PhD dissertation titled “Natural Language Input for a Computer Problem Solving System” and develops STUDENT, a natural language understanding computer program.

August 16, 1964 Isaac Asimov writes in the New York Times: “The I.B.M. exhibit at the [1964 World’s Fair]… is dedicated to computers, which are shown in all their amazing complexity, notably in the task of translating Russian into English. If machines are that smart today, what may not be in the works 50 years hence? It will be such computers, much miniaturized, that will serve as the ‘brains’ of robots… Communications will become sight-sound and you will see as well as hear the person you telephone. The screen can be used not only to see the people you call but also for studying documents and photographs and reading passages from books.”

1965 Herbert Simon predicts that “machines will be capable, within twenty years, of doing any work a man can do.”

1965 Hubert Dreyfus publishes “Alchemy and AI,” arguing that the mind is not like a computer and that there were limits beyond which AI would not progress.

1965 I.J. Good writes in “Speculations Concerning the First Ultraintelligent Machine” that “the first ultraintelligent machine is the last invention that man need ever make, provided that the machine is docile enough to tell us how to keep it under control.”

1965 Joseph Weizenbaum develops ELIZA, an interactive program that carries on a dialogue in English on any topic. Weizenbaum, who wanted to demonstrate the superficiality of communication between man and machine, was surprised by the number of people who attributed human-like feelings to the computer program.

1965 Edward Feigenbaum, Bruce G. Buchanan, Joshua Lederberg, and Carl Djerassi start working on DENDRAL at Stanford University. The first expert system, it automated the decision-making process and problem-solving behavior of organic chemists, with the general aim of studying hypothesis formation and constructing models of empirical induction in science.

1966 Shakey the robot is the first general-purpose mobile robot to be able to reason about its own actions. In a 1970 Life magazine article about this “first electronic person,” Marvin Minsky is quoted as saying with “certitude”: “In from three to eight years we will have a machine with the general intelligence of an average human being.”

1968 The film 2001: A Space Odyssey is released, featuring HAL 9000, a sentient computer.

1968 Terry Winograd develops SHRDLU, an early natural language understanding computer program.

1969 Arthur Bryson and Yu-Chi Ho describe backpropagation as a multi-stage dynamic system optimization method. A learning algorithm for multi-layer artificial neural networks, it has contributed significantly to the success of deep learning in the 2000s and 2010s, once computing power had sufficiently advanced to accommodate the training of large networks.

1969 Marvin Minsky and Seymour Papert publish Perceptrons: An Introduction to Computational Geometry, highlighting the limitations of simple neural networks. In an expanded edition published in 1988, they responded to claims that their 1969 conclusions significantly reduced funding for neural network research: “Our version is that progress had already come to a virtual halt because of the lack of adequate basic theories… by the mid-1960s there had been a great many experiments with perceptrons, but no one had been able to explain why they were able to recognize certain kinds of patterns and not others.”

1970 The first anthropomorphic robot, the WABOT-1, is built at Waseda University in Japan. It consisted of a limb-control system, a vision system and a conversation system.

1971 Michael S. Scott Morton publishes Management Decision Systems: Computer-Based Support for Decision Making, summarizing his studies of the various ways by which computers and analytical models could assist managers in making key decisions.

1971 Arthur Miller writes in The Assault on Privacy that “Too many information handlers seem to measure a man by the number of bits of storage capacity his dossier will occupy.”

1972 MYCIN, an early expert system for identifying bacteria causing severe infections and recommending antibiotics, is developed at Stanford University.

1973 James Lighthill reports to the British Science Research Council on the state of artificial intelligence research, concluding that “in no part of the field have discoveries made so far produced the major impact that was then promised,” leading to drastically reduced government support for AI research.

1976 Computer scientist Raj Reddy publishes “Speech Recognition by Machine: A Review” in the Proceedings of the IEEE, summarizing the early work on Natural Language Processing (NLP).

1978 The XCON (eXpert CONfigurer) program, a rule-based expert system assisting in the ordering of DEC’s VAX computers by automatically selecting the components based on the customer’s requirements, is developed at Carnegie Mellon University.

1979 The Stanford Cart successfully crosses a chair-filled room without human intervention in about five hours, becoming one of the earliest examples of an autonomous vehicle.

1979 Kunihiko Fukushima develops the neocognitron, a hierarchical, multilayered artificial neural network.

1980 I.A. Tjomsland applies Parkinson’s First Law to the storage industry: “Data expands to fill the space available.”

1980 Wabot-2 is built at Waseda University in Japan, a musician humanoid robot able to communicate with a person, read a musical score and play tunes of average difficulty on an electronic organ.

1981 The Japanese Ministry of International Trade and Industry budgets $850 million for the Fifth Generation Computer project. The project aimed to develop computers that could carry on conversations, translate languages, interpret pictures, and reason like human beings.

1981 The Chinese Association for Artificial Intelligence (CAAI) is established.

1984 Electric Dreams is released, a film about a love triangle between a man, a woman and a personal computer.

1984 At the annual meeting of AAAI, Roger Schank and Marvin Minsky warn of the coming “AI Winter,” predicting an imminent bursting of the AI bubble (which did happen three years later), similar to the reduction in AI investment and research funding in the mid-1970s.

1985 The first business intelligence system is developed for Procter & Gamble by Metaphor Computer Systems to link sales information and retail scanner data.

1986 First driverless car, a Mercedes-Benz van equipped with cameras and sensors, built at Bundeswehr University in Munich under the direction of Ernst Dickmanns, drives up to 55 mph on empty streets.

October 1986 David Rumelhart, Geoffrey Hinton, and Ronald Williams publish “Learning representations by back-propagating errors,” in which they describe “a new learning procedure, back-propagation, for networks of neuron-like units.”

1987 The video Knowledge Navigator, accompanying Apple CEO John Sculley’s keynote speech at Educom, envisions a future in which “knowledge applications would be accessed by smart agents working over networks connected to massive amounts of digitized information.”

1988 Judea Pearl publishes Probabilistic Reasoning in Intelligent Systems. His 2011 Turing Award citation reads: “Judea Pearl created the representational and computational foundation for the processing of information under uncertainty. He is credited with the invention of Bayesian networks, a mathematical formalism for defining complex probability models, as well as the principal algorithms used for inference in these models. This work not only revolutionized the field of artificial intelligence but also became an important tool for many other branches of engineering and the natural sciences.”

1988 Rollo Carpenter develops the chat-bot Jabberwacky to “simulate natural human chat in an interesting, entertaining and humorous manner.” It is an early attempt at creating artificial intelligence through human interaction.

1988 Members of the IBM T.J. Watson Research Center publish “A statistical approach to language translation,” heralding the shift from rule-based to probabilistic methods of machine translation, and reflecting a broader shift to “machine learning” based on statistical analysis of known examples, not comprehension and “understanding” of the task at hand (IBM’s project Candide, successfully translating between English and French, was based on 2.2 million pairs of sentences, mostly from the bilingual proceedings of the Canadian parliament).

1988 Marvin Minsky and Seymour Papert publish an expanded edition of their 1969 book Perceptrons. In “Prologue: A View from 1988” they wrote: “One reason why progress has been so slow in this field is that researchers unfamiliar with its history have continued to make many of the same mistakes that others have made before them.”

1989 Yann LeCun and other researchers at AT&T Bell Labs successfully apply a backpropagation algorithm to a multi-layer neural network, recognizing handwritten ZIP codes. Given the hardware limitations at the time, it took about 3 days to train the network, still a significant improvement over earlier efforts.

March 1989 Tim Berners-Lee writes “Information Management: A Proposal,” and circulates it at CERN.

1990 Rodney Brooks publishes “Elephants Don’t Play Chess,” proposing a new approach to AI—building intelligent systems, specifically robots, from the ground up and on the basis of ongoing physical interaction with the environment: “The world is its own best model… The trick is to sense it appropriately and often enough.”

October 1990 Tim Berners-Lee begins writing code for a client program, a browser/editor he calls WorldWideWeb, on his new NeXT computer.

1993 Vernor Vinge publishes “The Coming Technological Singularity,” in which he predicts that “within thirty years, we will have the technological means to create superhuman intelligence. Shortly after, the human era will be ended.”

September 1994 BusinessWeek publishes a cover story on “Database Marketing”: “Companies are collecting mountains of information about you, crunching it to predict how likely you are to buy a product, and using that knowledge to craft a marketing message precisely calibrated to get you to do so…. many companies believe they have no choice but to brave the database-marketing frontier.”

1995 Richard Wallace develops the chatbot A.L.I.C.E (Artificial Linguistic Internet Computer Entity), inspired by Joseph Weizenbaum’s ELIZA program, but with the addition of natural language sample data collection on an unprecedented scale, enabled by the advent of the Web.

1997 Sepp Hochreiter and Jürgen Schmidhuber propose Long Short-Term Memory (LSTM), a type of recurrent neural network used today in handwriting recognition and speech recognition.

October 1997 Michael Cox and David Ellsworth publish “Application-controlled demand paging for out-of-core visualization” in the Proceedings of the IEEE 8th conference on Visualization. They start the article with “Visualization provides an interesting challenge for computer systems: data sets are generally quite large, taxing the capacities of main memory, local disk, and even remote disk. We call this the problem of big data. When data sets do not fit in main memory (in core), or when they do not fit even on local disk, the most common solution is to acquire more resources.” It is the first article in the ACM digital library to use the term “big data.”

1997 Deep Blue becomes the first computer chess-playing program to beat a reigning world chess champion.

1998 The first Google index has 26 million Web pages.

1998 Dave Hampton and Caleb Chung create Furby, the first domestic or pet robot.

1998 Yann LeCun, Yoshua Bengio and others publish papers on the application of neural networks to handwriting recognition and on optimizing backpropagation.

October 1998 K.G. Coffman and Andrew Odlyzko publish “The Size and Growth Rate of the Internet.” They conclude that “the growth rate of traffic on the public Internet, while lower than is often cited, is still about 100% per year, much higher than for traffic on other networks. Hence, if present growth trends continue, data traffic in the U. S. will overtake voice traffic around the year 2002 and will be dominated by the Internet.”

2000 Google’s index of the Web reaches the one-billion mark.

2000 MIT’s Cynthia Breazeal develops Kismet, a robot that could recognize and simulate emotions.

2000 Honda’s ASIMO robot, an artificially intelligent humanoid robot, is able to walk as fast as a human, delivering trays to customers in a restaurant setting.

October 2000 Peter Lyman and Hal R. Varian at UC Berkeley publish “How Much Information?” It is the first comprehensive study to quantify, in computer storage terms, the total amount of new and original information (not counting copies) created in the world annually and stored in four physical media: paper, film, optical (CDs and DVDs), and magnetic. The study finds that in 1999, the world produced about 1.5 exabytes of unique information, or about 250 megabytes for every man, woman, and child on earth. It also finds that “a vast amount of unique information is created and stored by individuals” (what it calls the “democratization of data”) and that “not only is digital information production the largest in total, it is also the most rapidly growing.” Calling this finding “dominance of digital,” Lyman and Varian state that “even today, most textual information is ‘born digital,’ and within a few years this will be true for images as well.” A similar study conducted in 2003 by the same researchers found that the world produced about 5 exabytes of new information in 2002 and that 92% of the new information was stored on magnetic media, mostly in hard disks.

2001 A.I. Artificial Intelligence is released, a Steven Spielberg film about David, a childlike android uniquely programmed with the ability to love.

2003 Paro, a therapeutic robot baby harp seal designed by Takanori Shibata of the Intelligent System Research Institute of Japan’s AIST, is selected as a “Best of COMDEX” finalist.

2004 The first DARPA Grand Challenge, a prize competition for autonomous vehicles, is held in the Mojave Desert. None of the autonomous vehicles finished the 150-mile route.

2006 Oren Etzioni, Michele Banko, and Michael Cafarella coin the term “machine reading,” defining it as an inherently unsupervised “autonomous understanding of text.”

2006 Geoffrey Hinton publishes “Learning Multiple Layers of Representation,” summarizing the ideas that have led to “multilayer neural networks that contain top-down connections and training them to generate sensory data rather than to classify it,” i.e., the new approaches to deep learning.

2006 The Dartmouth Artificial Intelligence Conference: The Next Fifty Years (AI@50) commemorates the 50th anniversary of the 1956 workshop. The conference director concludes: “Although AI has enjoyed much success over the last 50 years, numerous dramatic disagreements remain within the field. Different research areas frequently do not collaborate, researchers utilize different methodologies, and there still is no general theory of intelligence or learning that unites the discipline.”

2007 Fei-Fei Li and colleagues at Princeton University start to assemble ImageNet, a large database of annotated images designed to aid in visual object recognition software research.

2007 John F. Gantz, David Reinsel and other researchers at IDC release a white paper titled “The Expanding Digital Universe: A Forecast of Worldwide Information Growth through 2010.” It is the first study to estimate and forecast the amount of digital data created and replicated each year. IDC estimates that in 2006, the world created 161 exabytes of data and forecasts that between 2006 and 2010, the information added annually to the digital universe will increase more than sixfold to 988 exabytes, or doubling every 18 months. According to the 2010 and 2012 releases of the same study, the amount of digital data created annually surpassed this forecast, reaching 1,227 exabytes in 2010, and growing to 2,837 exabytes in 2012. In 2020, IDC estimated that 59,000 exabytes of data would be created, captured, copied, and consumed in the world that year.

2009 Hal Varian, Google’s Chief Economist, tells the McKinsey Quarterly: “I keep saying the sexy job in the next ten years will be statisticians. People think I’m joking, but who would’ve guessed that computer engineers would’ve been the sexy job of the 1990s? The ability to take data—to be able to understand it, to process it, to extract value from it, to visualize it, to communicate it—that’s going to be a hugely important skill in the next decades.”

2009 Mike Driscoll writes in “The Three Sexy Skills of Data Geeks”: “…with the Age of Data upon us, those who can model, munge, and visually communicate data—call us statisticians or data geeks—are a hot commodity.”

2009 Rajat Raina, Anand Madhavan and Andrew Ng publish “Large-scale Deep Unsupervised Learning using Graphics Processors,” arguing that “modern graphics processors far surpass the computational capabilities of multicore CPUs, and have the potential to revolutionize the applicability of deep unsupervised learning methods.”

2009 Google starts developing, in secret, a driverless car. In 2014, it became the first to pass, in Nevada, a U.S. state self-driving test.

2009 Computer scientists at the Intelligent Information Laboratory at Northwestern University develop Stats Monkey, a program that writes sport news stories without human intervention.

2010 Launch of the ImageNet Large Scale Visual Recognition Challenge (ILSVRC), an annual AI object recognition competition.

2010 Kenneth Cukier writes in The Economist Special Report “Data, Data Everywhere”: “… a new kind of professional has emerged, the data scientist, who combines the skills of software programmer, statistician and storyteller/artist to extract the nuggets of gold hidden under mountains of data.”

2011 Martin Hilbert and Priscila Lopez publish “The World’s Technological Capacity to Store, Communicate, and Compute Information” in Science. They estimate that the world’s information storage capacity grew at a compound annual growth rate of 25% per year between 1986 and 2007. They also estimate that in 1986, 99.2% of all storage capacity was analog, but in 2007, 94% of storage capacity was digital, a complete reversal of roles (in 2002, digital information storage surpassed non-digital for the first time).

2011 A convolutional neural network wins the German Traffic Sign Recognition competition with 99.46% accuracy (vs. humans at 99.22%).

2011 Watson, a natural language question answering computer, competes on Jeopardy! and defeats two former champions.

2011 Researchers at the IDSIA in Switzerland report a 0.27% error rate in handwriting recognition using convolutional neural networks, a significant improvement over the 0.35%-0.40% error rate in previous years.

June 2012 Jeff Dean and Andrew Ng report on an experiment in which they showed a very large neural network 10 million unlabeled images randomly taken from YouTube videos, and “to our amusement, one of our artificial neurons learned to respond strongly to pictures of… cats.”

September 2012 Tom Davenport and D.J. Patil publish “Data Scientist: The Sexiest Job of the 21st Century” in the Harvard Business Review.

October 2012 A convolutional neural network designed by researchers at the University of Toronto achieves an error rate of only 16% in the ImageNet Large Scale Visual Recognition Challenge, a significant improvement over the 25% error rate achieved by the best entry the year before.

March 2016 Google DeepMind’s AlphaGo defeats Go champion Lee Sedol.

2019 The number of Internet users worldwide surpasses 4 billion.

March 2019 The Association for Computing Machinery (ACM) names Yoshua Bengio, Geoffrey Hinton, and Yann LeCun recipients of the 2018 ACM A.M. Turing Award for conceptual and engineering breakthroughs that have made deep neural networks a critical component of computing. “Artificial intelligence is now one of the fastest-growing areas in all of science and one of the most talked-about topics in society,” said ACM President Cherri M. Pancake. “The growth of and interest in AI is due, in no small part, to the recent advances in deep learning for which Bengio, Hinton and LeCun laid the foundation. These technologies are used by billions of people.”